
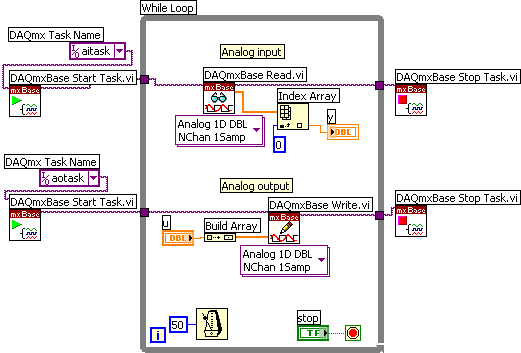

Overview

This VI takes one image from a camera and tracks the object using pattern matching by selecting the ROI or object.

Description

This VI does pattern matching to track the object. The user is able to take one image coming from the camera and then select the ROI or object that they would like to track.

Requirements

• LabVIEW Base Development System 2012 (or compatible)
• Vision Development Module

Steps to Implement or Execute Code

• Unzip the attached folder “Object Tracking V2 2012 NIVerified.zip”
• Open the VI 'Object Tracking V2 2012 NIVerified.vi'
• Run the program

Additional Information or References

VI Front Panel

VI Block Diagram

**This document has been updated to meet the current required format for the NI Code Exchange.**

Unfortunately, I have not had time to implement this, but my idea was to do something similar to what you suggest.

The idea would involve geometric pattern matching and an array of template images. The program would 'learn' new template images programmatically, based on the first image selected by the user. This would allow the program to better recognize the object at different projections or angles. You would need to manage the array of template images so that it does not grow too large if you are adding to it programmatically. You would also need to make sure the images you add to the array are of good quality, so that they do not negatively impact the geometric matching. I hope this helps. The short answer is yes, you can implement a better tracking algorithm, but it will take some editing of the current program.

I have implemented a similar tracking technique; however, it seems that this is not really 'tracking' but rather 'matching.' It appears to me that the template is matched independently in each frame rather than tracked between frames. When I use this technique on a UGV in the field, it can only identify the correct patterns at the distance at which the template was recorded. I feed the offset between the x pixel coordinate of the tracking target's centroid and the longitudinal axis of the vehicle to a PID controller that actuates a steering servo. As the vehicle homes in on the target and the distance between the camera and the target decreases, the target is lost, because it occupies more pixels in the image than it did in the recorded template. If the distance between the camera and the target remains constant, this approach is a good choice.
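For readers who want to see the steering loop from the last comment in text form, here is a minimal Python sketch of feeding a pixel offset to a PID controller. It is not the commenter's LabVIEW code. The names `PID` and `steering_error`, the gains, and the output limits are all hypothetical, and the sketch assumes the camera's optical axis is aligned with the vehicle's longitudinal axis, so that the image center column stands in for that axis.

```python
from dataclasses import dataclass, field
from typing import Optional


def steering_error(centroid_x: float, image_width: int) -> float:
    """Horizontal offset of the matched target from the image center,
    normalized to [-1, 1]. Assumes the image center column corresponds
    to the vehicle's longitudinal axis (an assumption of this sketch)."""
    center = (image_width - 1) / 2.0
    return (centroid_x - center) / center


@dataclass
class PID:
    """Textbook PID controller with a clamped output (no anti-windup)."""
    kp: float
    ki: float = 0.0
    kd: float = 0.0
    out_min: float = -1.0   # hypothetical servo command limits
    out_max: float = 1.0
    _integral: float = field(default=0.0, init=False, repr=False)
    _prev_error: Optional[float] = field(default=None, init=False, repr=False)

    def update(self, error: float, dt: float) -> float:
        """Return the next steering command for the given error and time step."""
        if dt <= 0:
            raise ValueError("dt must be positive")
        self._integral += error * dt
        # No derivative term on the first sample, since there is no history yet.
        if self._prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self._prev_error) / dt
        self._prev_error = error
        output = self.kp * error + self.ki * self._integral + self.kd * derivative
        # Clamp to the range the steering servo accepts.
        return max(self.out_min, min(self.out_max, output))


if __name__ == "__main__":
    controller = PID(kp=0.8, ki=0.1, kd=0.05)  # illustrative gains only
    # Pretend the matcher reported these centroid x positions in a 640-px-wide image.
    for x in (500.0, 450.0, 400.0, 350.0):
        command = controller.update(steering_error(x, 640), dt=0.05)
        print(f"centroid_x={x:.0f} -> steering command {command:+.3f}")
```

The controller only sees where the match landed in the frame, so it inherits the limitation described above: once the match is lost because the target's apparent size has changed, there is no error signal left to steer on.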
